My last Christmas Eve in Warsaw —

the gray, uncertain day

dying into the early dark.

We wait for the first star, then light

the twelve skinny candles on the tree

and break the wishing wafer.

Holding a jagged shard of a wish,

mother intones: “Health and success,

fulfillment of all dreams.”

Kissing on both cheeks,

we break the wafer each with each.

So begins Wigilia,

the supper of Christmas Eve.

The number of the dishes

has to be odd: spicy red borscht

with uszka, “little ears” —

pierogi with cabbage and wild mushrooms

soaked back to dark flesh

from the pungent wreaths;

fish — the humble carp;

potatoes, a compote from dried fruit,

and poppy-seed cake.

Father counts: “If it doesn’t

come out right, we can always

include tea.”

He drops a pierog

on the starched tablecloth.

I stifle laughter as he picks it up

solemnly like a communion host.

On the fragrant, flammable tree,

angel-hair trembles in silver drafts.

Then we turn off the electric lights.

Now only the candles glow

in a heavenly hush,

though I no longer believe in heaven.

Father sets a match

to the “cold fires.” Icy starbursts hiss

over the staggered pyramid of gifts:

slippers and scarves, a warm skirt,

socks and more socks,

a book I will not finish.

We no longer sing carols,

mother playing the piano —

the piano sold by then,

a TV set in its place.

Later, unusual for a Christmas Eve,

we go for a walk. The streets

are empty; a few passers-by

like grainy figures in an old movie.

It begins to snow.

I never saw such tenderness —

snowflakes like moths of light

soothing the bare branches,

glimmering across

hazy halos of street lamps.

Each weightless as a wish,

snowflakes kiss our cheeks.

They settle on the benches and railings,

on the square roofs of kiosks —

on the peaceful,

finally forgiven city.

~ Oriana

THE GHOST OF CHRISTMAS PAST

Oriana:

For me Christmas had always been about the special food on Christmas Eve (which in Polish is not called “Christmas Eve” but “vigil” [Wigilia]; see my poem “Cold Fires” on this page), the evergreen tree (which in Polish is not called a “Christmas tree”) and its scent (ah, the scent! This holiday was very much about the scent of an evergreen), the gifts, the tree lights and ornaments, the wishes, the nice clothes, the carols, the family warmth and coziness. Even back when I did go to church, that was not the important part of the holiday. If food was a “ten,” then church was a “one.”

So when I stopped going to church on Christmas or any other day, Christmas went on as before — defined by the special supper and gift-giving.

Nor did I miss anything. I left the nativity story as I left children's books — which I also didn’t miss, except maybe Winnie the Pooh.

God was the all-seeing, all-powerful tyrant to be feared, and the sweetness of the nativity creche did not obscure that. Not for me. I did like the animals though. They were the best part. They lent the most comfort and a momentary forgetting that here was a terrorist religion based on threats of hellfire. It was marvelous to drop that part and keep on enjoying the celebration.

So Christmas was basically secular from the start. But that doesn’t mean that I object to the creche displays. True, at first it was a bit of a shock to discover that it was all a myth — not Mary’s virginity, since that part was obvious, but Bethlehem, the census, the fact that the “slaughter of the innocents” never took place but was invented to echo the slaughter of the first-born Egyptian infants, just as the flight into Egypt was later invented so that there could be a return from Egypt.

Or the heavy possibility that Jesus never even existed — at least not as presented in the Gospels. But even before I fully digested the made-up nature of it all, I was able to enjoy the stories as stories — the way I loved Greek myths, even with the cruelty inherent in many of them. “The Greeks really had great imagination,” I used to think — never mind the fusion from other traditions, never mind any scholarly examination.

And if someone says “Merry Christmas” to me, I say it back, knowing that for neither of us is it about religion.

Mary:

The history of how the American Christmas developed is both interesting and surprising. It makes sense that the holiday gained significance and importance through the time of the Civil War, when so many families were separated for so long, suffered so many losses, and the fabric of society itself was so threatened and tested. Home, peace and family were more precious and important than ever, and making the observance of Christmas a national holiday could contribute to recovery from the divisions and privations of war.

The secular celebration was already more important to unity than the religious observance. The transition from the old ways of observing Christmas, more dedicated to spiritual and religious matters, to the current form was like the Americanization of Old World traditions. Immigrants would observe the old traditions: the Wigilia, the Feast of the Seven Fishes, Little Christmas, the blessing of food in the church, the holy wafer at the feast. But as the generations followed, more and more of the old observances would disappear, and the most persistent, those that remained the longest, would be mostly the traditions around celebratory foods, often reserved only for this season.

The crazy, and ugly, commercialization of Christmas is an unfortunate modern American development. Not gift giving in itself, but the amping up of an old tradition into a pressurized madness to spend and spend and spend, the obscene scenarios on “Black Friday,” the frantic greed and grab, the fact that retailers depend on Christmas buying for success... all this not only takes over but actually displaces many of the pleasures of the celebration. People go into debt, spend what they can’t afford, and it doesn’t make anyone happier.

I always felt the heart of the season was a celebration of light. In religion, for Christians, the birth of Christ as the Light of the World. I love the lights of Christmas, the lighted trees, the lights strung up everywhere, shining in the dark. And the songs, the music. Those are the ways I like to celebrate...family, food, light, music. Gifts are always small and incidental, not the main point.

Oriana:

Yes, the “war on Christmas” has already been won — by the merchants. Not that the churches made any valiant effort to save Christmas from Mammon.

I stopped celebrating after my mother died. But even long before then, Christmas wasn't real Christmas anymore. At least you and I have memories.

**

“I'm dreaming of a wet Christmas” — this year, the dream came true in California. There could be no better Christmas gift than rain.

But speaking of the Ghost of Christmas Past, thirty years ago the red hammer-and-sickle flag was lowered from the Kremlin flagpole and the Soviet Union ceased to exist. Talk about a Christmas gift to the world! Putin called it “the greatest geopolitical catastrophe of the century.”

This YouTube video is a must-see (and must-hear: you’ll hear some of the Soviet anthem, which has a massive grandeur):

https://www.youtube.com/watch?v=xak4CaM-Nvg

*

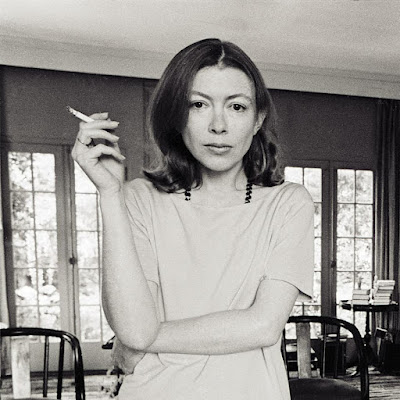

WE HAVE LOST JOAN DIDION

“We tell ourselves stories in order to live...We look for the sermon in the suicide, for the social or moral lesson in the murder of five. We interpret what we see, select the most workable of the multiple choices. We live entirely, especially if we are writers, by the imposition of a narrative line upon disparate images, by the ‘ideas’ with which we have learned to freeze the shifting phantasmagoria which is our actual experience.”

~ Joan Didion, The White Album

*

BALANCED ON THE VERGE OF A NERVOUS BREAKDOWN AND A NOBEL PRIZE

~ Joan Didion was a mood, the static in the air before an earthquake, the sense that your footing is crumbling beneath you. She started out as a John Wayne–worshipping Goldwater Republican and ended up criticizing U.S. foreign policy and debunking the case against the Central Park Five in the New York Review of Books. Her signature style, honed and hardened by disillusionment and a profound mistrust of sentimentality in all its forms, was both a repudiation of her roots and a veiled embrace of its frontier toughness. Descended from the survivors of the Donner Party, she prided herself on facing brutal truths, even when one of those truths is that the mythos that gave your ancestors the will to carry on was just another sham.

She produced a number of much-quoted lines, from “We tell ourselves stories in order to live” (not the bromide about the life-giving power of fiction it’s typically taken to be) to “Writers are always selling somebody out” (which is quintessential Didion). One of those lines, “Style is character,” from an interview with the Paris Review, seemed to capture her own ethos best. Her first real job was in New York, at Vogue, where, she always maintained, she learned to write with the utmost of compression and economy.

By the end of her career, Didion was as much an icon for her persona as for her writings, hired by Celine to model sunglasses in magazine ads and adored by young women writers for her chic sangfroid as well as her prose. Photos taken of Didion in Los Angeles in 1968 in a long dress, cigarette in hand, leaning against her yellow Corvette, captured an idea about female artistry, simultaneously fragile and impenetrable, balanced on the verge of a nervous breakdown and a Nobel Prize, that, while clearly no fun—photos of Didion smiling are vanishingly rare—nevertheless felt enviably cool.

In that same Paris Review interview, Didion explained that despite writing from her early childhood, at first she wanted to be an actress, like the main character of her best-known novel, Play It as It Lays. “It’s the same impulse,” she said. “It’s make-believe. It’s performance.” The great movie stars of Didion’s era exerted a particular kind of charisma that she, too, mastered. It’s the art of offering your whole self up to the camera or the page and yet also holding something back. Didion’s restraint and discipline as a writer served as more than just the container for the various species of chaos she wrote about. It also gestured toward the unsaid, the part of herself she kept forever in reserve.

Few actors can finesse this paradox, and even fewer writers. Didion herself didn’t always pull it off smoothly. After The Year of Magical Thinking, she published 2011’s Blue Nights, an account of her daughter’s death that, according to her biographer, Tracy Daugherty, attracted criticism for shying away from a full account of the cause of that death. That omission pointed, perhaps, toward where her true vulnerabilities lay.

Such slips were rare, however, and even in her confessional mode, Didion always felt elusive. That was what made us want to follow her across the assortment of blasted heaths she visited, surveyed, and dissected with the chilliest aplomb. There was the precision of her prose and then there was the mystery of the woman who chipped it out of ice, so pure and translucent it seemed like a natural phenomenon. Like all the best mysteries, it will never be solved. ~

https://slate.com/culture/2021/12/joan-didion-death-obituary-magical-thinking.html?fbclid=IwAR2oXV3NznWVuUyZNxYYGc4pMKkk_uvQSciUZ1hwV_zthDg76GfRCdUC8d4

Oriana:

Didion’s adoptive daughter, Quintana, died of acute pancreatitis, a disease most often caused by alcoholism. But I don’t think a mother should be condemned for not discussing her daughter’s alcoholism, especially while she’s still mourning both the loss of her husband and her daughter’s untimely death at only 39. Of course a mother would try to paint a sympathetic portrait of her child rather than hang the “alcoholic” label on her. Didion wanted the readers to appreciate other facets of Quintana’s personality. That is excusable in a mother.

On the other hand, Didion helped kindle the awareness that adopted children may be traumatized by the knowledge of having been adopted (i.e., given away by their genetic parents; is “abandonment” too strong a term?), and that the match with adoptive parents is not always successful.

And we shouldn’t forget that Quintana’s death was a finale to a life marred by rapid mood changes, depression, and even suicidal despair:

“I had seen her wishing for death as she lay on the floor of her sitting room in Brentwood Park, the sitting room from which she had been able to look into the pink magnolia. Let me just be in the ground, she had kept sobbing. Let me just be in the ground and go to sleep.” ~ Joan Didion, Blue Nights

*

JOAN DIDION’S CONTROLLED ANXIETY

~ She was famous for her detached, sometimes elegiac tone, but returned to alienation and isolation throughout her career, whether she was exploring her own grief after the death of her husband John Gregory Dunne in the Pulitzer-winning The Year of Magical Thinking, the emptiness of Hollywood life in the novel Play It as It Lays, or expats caught up in Central American politics in her novel A Book of Common Prayer.

Didion was born in Sacramento in 1934 and spent her early childhood free from the restrictions of school, with her father’s job in the army air corps taking the family countrywide. A “nervous” child with a tendency to headaches, Didion nonetheless began her path early, starting her first notebook when she was five. While her father was stationed in Colorado Springs, she took to walking around the psychiatric hospital that backed on to their home garden, recording conversations that she’d later work into stories. In a 2003 interview with the Guardian, she recalled an incident when she was 10: while writing a story about a woman who killed herself by walking into the ocean, she “wanted to know what it would feel like, so I could describe it” and almost drowned on a California beach. She never told her parents. (“I think the adults were playing cards.”)

From reading Ernest Hemingway and Henry James, she learned to dedicate time to crafting a perfect sentence; she taught herself to use a typewriter by copying out the former’s stories. “Writing is the only way I’ve found that I can be aggressive,” she once said. “I’m totally in control of this tiny, tiny world.” ~

https://www.irishtimes.com/culture/books/joan-didion-american-journalist-and-author-dies-at-age-87-1.4763144

Oriana:

I admired Didion’s laser-like intelligence. Yes, she did provide clarity. The Year of Magical Thinking is extraordinary.

She lived a writer’s life to the fullest, leaving behind much admirable prose — admirable not only because of its spare style but also for its cultural insights.

Didion died this past Thursday, December 23, aged 87. How quickly we now learn of our losses. But because I watched my father die of Parkinson's, I am glad she got released.

*

BEING THE RICARDOS: DON’T EXPECT COMEDY; IT’S A VERY TENSE, SAD MOVIE

Oriana:

I think the average viewer goes to this movie, a Christmas-time release, expecting a comedy — or at least a good deal of humor. Instead, we get slapped in the face with the story of an unhappy marriage. The last word on the screen is “divorce.”

And basically all the important characters in the movie are unhappy. Now, that could be much easier to watch if we had more clips from the real “I Love Lucy” show. That would introduce nostalgic laughter and explain why the show achieved such stunning popularity.

As is, the movie is a heavy drama, sometimes sinking under its weight. There are several excellent scenes, but overall, there is no magic.

Though Kidman is competent, she is miscast as Lucy. She is all anorexic angles against Lucy’s comfortable curves. Javier Bardem, though, even if he too is unlike the real-life Desi Arnaz, has enough charisma to make us understand why women would throw themselves at him. A womanizing charmer/"Latin lover," he makes that stereotype come to life. He also delivers a pleasant surprise once we understand that he really does love Lucy and is protective of her.

But funny? No, he isn't funny in this movie. This is a movie in which no one is happy or funny.

*

“A FILMED WIKIPEDIA PAGE”

~ With Sorkin the writer also serving as director, Being the Ricardos was doomed from the start. There are moments when the movie pops and the filmmaker seems in sync with his cast, his cast seems in sync with one another, and the intended sparks fly. But they’re fleeting. Sorkin stalls the film’s urgency with endless flashbacks and flash-forwards, with characters frequently restating (and overstating) ideas and emotions we’ve just seen dramatized. And when he comes up emotionally short, he resorts to a hoary, obvious score (by the usually dependable Daniel Pemberton). The whole thing is strangely lifeless as a result, a museum piece, a carefully curated display of old-timey television with nothing much at stake.

Being the Ricardos takes as its hook a short-lived scandal: In 1952, star Lucille Ball was investigated by the House Un-American Activities Committee for nebulous ties to the Communist Party in her youth, a bit of gossip leaked by the notorious columnist Walter Winchell. This upended Ball’s life for a week in the midst of I Love Lucy’s domination, threatening to bring the show, as well as the careers of its stars, Ball (Nicole Kidman) and husband, business partner, and co-star Desi Arnaz (Javier Bardem), to a hasty conclusion. “It was a scary time,” explain the actors playing the show’s writers Jess Oppenheimer (Tony Hale), Bob Carroll Jr. (Jake Lacy), and Madelyn Pugh (Alia Shawkat), whose recollections illuminate that stressful week. It’s the kind of dual (warring, perhaps) storytelling device that Sorkin loves, a chance to spin several plates at once.

The trouble is he isn’t a graceful enough director to execute such narrative acrobatics. Much of the couple’s backstory — Ball’s frustrated attempts at movie stardom, the fiery attraction between her and Arnaz, the logistics of careers that initially kept them apart — is dramatized capably, firmly rooted in old Hollywood history while invested in the complex politics of navigating show business as a headstrong woman. But Sorkin stuffs in complications that occurred elsewhere in the I Love Lucy timeline, including gossip rags running stories of Desi’s infidelity and the battle to work Lucy’s pregnancy into the show, turning the movie’s scope into what she calls “a compound fracture of a week.” The story could have succinctly captured Lucy and Desi’s lives and relationship via the earth-shattering events of this confined period, but the copious cuts forward and backward in time keep undermining that potential. Being the Ricardos turns into a filmed Wikipedia page, too flighty and shallow to give us any real emotional insight or to add to I Love Lucy’s well-known lore.

It can be dry as a Wikipedia page, too. This is a film about one of the funniest people of the 20th century. Yet presented with Ball’s unique flair for pratfalls and punch lines, Sorkin dwells instead on a laser-focused Kidman thinking her bits of business through.

That said, the longtime television pro knows what bickering over business at the table read and power plays in rehearsals look and sound like, and he nails the rivalries and running jokes that become part of the work environment. Of particular note is the subplot concerning Nina Arianda’s Vivian Vance, who considered herself more of a pretty ingenue than a frumpy sidekick. Arianda and Kidman flesh out the prickly dynamic between Vance and Ball and Vance’s ongoing distaste with her place on the show. It’s a fascinating footnote, sympathetically portrayed.

The sturdy cast of supporting players and character actors get their arms around Sorkin’s stylized dialogue with ease. His customary rat-tat-tat rhythms don’t feel too contemporary here, indebted as they are to the screwball comedies of an earlier era. J.K. Simmons proves the picture’s MVP, envisioning his William Frawley as a mixture of merciless insult comic and seen-it-all showbiz cynic. Clark Gregg (as CBS exec Howard Wenke), Alia Shawkat, Jake Lacy, and Tony Hale all make the most of their limited screen time.

The central performers have more trouble. Both Kidman and Bardem are a good decade too old for their roles. Hair aside, Kidman just doesn’t look much like Ball (and the attempts to make her look like Lucy with the help of prosthetics just underscore that point), and she can’t do slapstick. It can be downright eerie to watch as Kidman, stone-faced, attempts classic sequences like the beloved stomping of the grapes and falls flat. She just slogs through it, seemingly embarrassed by the endeavor. ~

https://www.vulture.com/article/movie-review-aaron-sorkins-being-the-ricardos.html

Oriana:

I agree that the scenes between “Lucy” and “Ethel” were the best in the movie, and, for me, saved the movie from being trivial and forgettable. “Ethel” complains that the show makes her fat and ugly, puts her in ugly dresses, and gives her an old man for a husband.

There is also another good proto-feminist scene where “Lucy” complains about the show’s presenting her as dumb, and the scriptwriter explains that yes, for the sake of comedy the show infantilizes the main character. She mustn’t show how smart she actually is.

With more such scenes, we would have an excellent, uncannily relevant movie. I'm not saying that the movie is bad — but it could be a lot better.

And I have to admit that despite its flaws, the movie has a haunting quality. If you crave holiday cheer, avoid it — but otherwise, I wouldn’t discourage anyone from seeing it.

*

I LOVE LUCY, BUT NOT HERE

~ Being the Ricardos ostensibly takes place during the production of one episode, from the Monday table read of the script to the Friday taping of the show in front of a live audience, but it’s almost incidental to the many, many other crises and conflicts that Sorkin piles on in his script, which is filled with so many flashbacks and flash forwards that one soon becomes as dizzy as the Lucy Ricardo character whom Ball portrayed so brilliantly for six seasons in the 1950s.

In a way, the movie’s title is wildly inaccurate, because the film resolutely shows us Ball and Arnaz as mostly anything but the Ricardos. That’s not a slam on the film because this is a deliberate choice Sorkin makes, and the lives and careers of both these pioneers are clearly much more rich and interesting than the comedic archetypes they portray. But even with terrific performances from the main cast–Nicole Kidman as Ball, Javier Bardem as Arnaz, J.K. Simmons and Nina Arianda as co-stars William Frawley and Vivian Vance–it’s hard to get a sense of what story Sorkin is trying to tell.

The ultimate problem with Being the Ricardos is that despite the hard work of its cast and Sorkin’s good intentions–his dialogue is as sharp as always and he never met a social issue he didn’t want to righteously incorporate into whatever script he’s working on–there are too many things happening at once for the viewer to get a real grip on who these people are, even if they’re among the most famous faces in pop culture history. (It also doesn’t help that Sorkin and cinematographer Jeff Cronenweth shoot most of the movie in dark offices or shadowy soundstages.) You may still love Lucy after watching this–as you should–but it’s hard to find a lot to love in Being the Ricardos. ~

https://www.denofgeek.com/movies/being-the-ricardos-review-i-love-lucy/

Oriana:

I agree that it’s hard to get the sense of the main story here. For me the strongest theme is Lucy’s shattered dream of a happy home. The word “home” has a terrific importance to her, but not to her husband, who’d rather fulfill the macho stereotype of a Latin-lover-womanizer — in no way just a supporting character. If the movie focused on “shattered dreams,” it would at least be coherent.

Let me finish with an excerpt from a particularly negative review in The Irish Times:

~ Beneath the snappy dialogue, there’s little spark or insight. Kidman simultaneously evokes Rosalind Russell and Marie Curie as Ball. It’s a fine performance but any resemblance to characters living or dead – including Lucille Ball – is purely coincidental.

Sorkin has said that he’s not a particular fan of I Love Lucy’s brand of slapstick and Being the Ricardos goes out of its snooty way to avoid anything as vulgar as Lucille Ball’s comedy, save for a very brief glimpse of the famous grape-stomping scene. The film’s obsession with process means we’re never getting to drink the wine. ~

https://www.irishtimes.com/culture/film/being-the-ricardos-a-sticky-situation-but-not-much-comedy-1.4752275

Deborah:

Nicole Kidman is a terrible Lucille Ball, just awful in my opinion. Kidman is not a funny actress, and it really shows in this movie. She has that snow-maiden, ready-for-my-close-up seriousness that just does not equal the full body performances and comedy of Lucille Ball.

Oriana:

I agree. She’s good in the feminist scenes, but she’s not a believable Lucy.

Nicole Kidman and Javier Bardem as Lucille Ball and Desi Arnaz

*

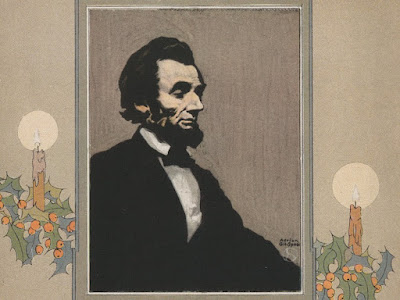

LINCOLN’S LAST CHRISTMAS

~ The character of American Christmas changed as a result of the Civil War

President Lincoln's final Christmas was a historic moment. The telegram he received from General William Tecumseh Sherman signaled that the end of the Civil War was near. But as Lincoln's personal Christmas story reveals, those conflict-filled years also helped shape a uniquely American Christmas.

Sherman’s telegram to the president, who had been elected to a second term only a month before, read “I beg to present you, as a Christmas gift, the city of Savannah, with 150 heavy guns and plenty of ammunition, and also about 25,000 bales of cotton.”

“Washington celebrated with a 300-gun salute,” writes the Wisconsin State Journal. This victory signaled that the end of the long, bloody war that shaped Lincoln’s presidency and the country was likely near. Lincoln wrote back: “Many, many thanks for your Christmas gift—the capture of Savannah. Please make my grateful acknowledgements to your whole army—officers and men.”

Although it separated many from their families, permanently or temporarily, the Civil War also helped to shape Americans’ experience of Christmas, which wasn’t a big holiday before the 1850s. “Like many other such ‘inventions of tradition,’ the creation of an American Christmas was a response to social and personal needs that arose at a particular point in history, in this case a time of sectional conflict and civil war,” writes Penne Restad for History Today.

By the time of the war, Christmas had gone from being a peripheral holiday celebrated differently all across the country, if it was celebrated at all, to having a uniquely American flavor.

“The Civil War intensified Christmas’s appeal,” Restad writes. “Its celebration of family matched the yearnings of soldiers and those they left behind. Its message of peace and goodwill spoke to the most immediate prayers of all Americans.”

This was true in the White House, too. “Lincoln never really sent out a Christmas message for the simple reason that Christmas did not become a national holiday until 1870, five years after his death,” writes Max Benavidez for Huffington Post. “Until then Christmas was a normal workday, although people did often have special Christmas dinners with turkey, fruitcake and other treats.”

During the war, Lincoln made Christmas-related efforts–such as having cartoonist Thomas Nast draw an influential illustration of Santa Claus handing out Christmas gifts to Union troops, Benavidez writes. But Christmas itself wasn't the big production it would become: In fact, the White House didn't even have a Christmas tree until 1889. But during the last Christmas of the war–and the last Christmas of Lincoln's life–we do know something about how he kept the holiday.

On December 25, the Lincolns hosted a Christmas reception for the cabinet, writes the White House Historical Association. They also had some unexpected guests for that evening’s Christmas dinner, the historical association writes. Tad Lincoln, the president’s rambunctious young son who had already helped inspire the tradition of a Presidential turkey pardon, invited several newsboys—child newspaper sellers who worked outdoors in the chilly Washington winter—to the Christmas dinner. “Although the unexpected guests were a surprise to the White House cook, the president welcomed them and allowed them to stay for dinner,” writes the historical association. The meal must have been a memorable one, for the newsboys at least. ~

https://www.smithsonianmag.com/smart-news/president-lincolns-last-christmas-180967617/?utm_source=facebook.com&utm_medium=socialmedia&fbclid=IwAR1f7WqhmoBsgerYjBSbQqVYOyxPjXviTjztMnnKQ-rfvO25tD4uss8az3w

JOSEPH MILOSCH: THE REAL WAR ON CHRISTMAS

When I hear conservatives say there is a war on Christmas, I take issue with their grammar. There is no war on Christmas, but there was a war. Wall Street waged it from 1920 until its victory in 1965. The Christians lost the crusade. When they are informed about their loss, they deny it. Christmas used to begin four weeks before Christmas Day, the first day of Christmas. In theory, it would continue for twelve days of spiritual reflections on the meaning of Christ’s life.

The Christmas season ended on the day the Magi arrived at the manger. The three wise men’s arrival is important to Christians because it symbolizes the gentiles’ inclusion in God’s plans. For many European immigrants, the Feast of the Epiphany was the day they opened their gifts. In 1953, I was in the first grade, and out of thirty students, fifteen received their presents on Old Christmas. By the time I was a senior in high school, in 1965, my classmates did not celebrate Little Christmas.

Why did the old tradition fade away? In 1920 an advertising agent prophesied a sales increase if the country celebrated only one day of Christmas. He chose Christmas Day because it was the first day of the Christmas season. Furthermore, he figured that starting sales a few weeks ahead of the holiday increased the pressure to buy and give gifts. This year more stores started their Christmas sales a week before Halloween, when advertising agencies asked consumers to “Get a Jump on Black Friday.” Some stayed open on Thanksgiving.

On the other hand, Christians believe the Christmas Season is a time of rejoicing and self-reflection. For Catholics, along with some other denominations, it begins with Advent. This four-week period begins four Sundays before Christmas and ends on Christmas Day. Advent is the time to prepare spiritually for Christmas Day and carry the preparation through the Twelve Days of Christmas. The composer of the song “The Twelve Days of Christmas” penned it as a spiritual resistance guide to the oppression by the dominant religion, the Anglican Church.

Based on a memory-forfeit game, the song originated after the deposition of the last Catholic King of England. At that time, acknowledging your Catholic faith was punishable by death. Catholics used the song to communicate their beliefs. Today, those ideas are interdenominational. During the Christmas Season, the faithful decipher the metaphors and employ them in prayer. On the first day, the devoted use the first tenet, and on the second day, the faithful employ the first two items.

This sequence continues until Little Christmas; then, the prayers include all twelve beliefs. This technique is similar to one found in gospel songs written by African-American slaves. They used lyrics as a means to resist oppression. The partridge in a pear tree refers to Christ crucified on the Cross. Thus, the birth of Christ leads to his death. It is an act performed for our salvation and gives Christmas its meaning.

The Two Turtle Doves stand for God’s everlasting friendship and love, which God shows by allowing the execution of his son. The Three French Hens stand for faith, hope, and charity. The Four Calling Birds are either the Four Gospels or their authors: Matthew, Mark, Luke, and John. Five Golden Rings are the first five books of the Old Testament. Six Geese a-laying are the days of Creation, and the Seven Swans a-swimming are the Holy Spirit’s blessings: prophecy, service, teaching, encouragement, giving, mercy, and leadership.

Eight maids a-milking are the beatitudes. The nine ladies dancing are the nine fruits of the Holy Spirit: love, joy, peace, patience, kindness, goodness, faithfulness, modesty, and self-control. The ten lords a-leaping are the Ten Commandments. The eleven pipers are the eleven loyal apostles, and the twelve drummers drumming are the twelve points in the Apostles’ Creed. Today, most Christians are not aware of the meaning of the lyrics, though they accept the beliefs expressed in the song.

Instead of practicing prayer and meditation to prepare for Christmas, we shop for gifts, food, and alcohol. Although many charity organizations and churches initiate food drives for the poor, it seems Christians act contrary to the spirit of Christmas. When we think of Christ’s birthday, we think about stores, which hire more security guards to protect themselves against shoplifters. During the holiday season, police departments increase their overtime to handle the escalation in theft, vandalism, and drunkenness.

Hospitals expand emergency room staff to deal with the surge in shootings and stabbings. TV commercials show us how to deal with family arguments, drunkenness, and abuse. Another commercial depicts people stuffing themselves with the Christmas meal before asking for food donations and gifts for the poor. These commercials seem to encourage us to force the poor to share in our day of gluttony — as if we enjoy rubbing the faces of the unfortunate in the poverty in which they live.

We give gifts to underprivileged children that their parents can’t afford. Then, we ignore their needs for the rest of the year. On Christmas Eve, the news posts all the sobriety checkpoints so we can avoid them. The TV is full of sporting events that depict an abundance of presents, drinks and food. While Christians unwrap their gifts, eat, and drink, the news uses the stores’ profits during the Christmas season to judge whether Christmas was successful.

On December twenty-fifth, the actions of Christians indicate less a commemoration of Jesus’s birth than a celebration of greed, gluttony, and drunkenness in the name of Christ. As they prepare for bed, they continue to complain about the war on Christmas. Then they consume Tums to relieve the gas and heartburn building up from eating and drinking to the extreme. Imagine what kind of country the United States would be if we practiced the meditation prayer depicted in The Twelve Days of Christmas?

Oriana:

“The partridge in a pear tree” is Christ on the Cross? This astonished me and I rushed to google the song. Sure enough: The partridge in a pear tree is symbolic of Christ upon the Cross. In the song, He is symbolically presented as a mother partridge because she would feign injury to decoy a predator away from her nest. She was even willing to die for them.

The tree is the symbol of redemption.

https://news.hamlethub.com/coscob/life/214-the-first-day-of-christmas-revealed

But there are also dissenting voices, pointing to secular meanings such as fertility. The “calling birds” were originally “colly birds,” meaning blackbirds (though in this instance spared being baked in a pie). In keeping with the bird motif, the five gold rings might refer to gold-ringed pheasants. The song is secular, loosely speaking a humorous courtship song, according to https://www.vox.com/21796404/12-days-of-christmas-explained

Though the song always struck me as a loony-tunes kind of annoying folklore, I can go along with the Catholic explanation. There are always magical numbers ascribed to sins and virtues alike, so . . . whatever works. And I agree that any spiritual practice would be preferable to the commercial orgy that modern Christmas has become.

Mary:

Oriana:

I can't help but see The Twelve Days of Christmas as too humorous to be a serious reminder of Catholic teachings. Christ as a partridge does not sit well with me. The pelican would be a more fitting symbol, though of course it's a myth that it feeds its young with its own blood.

It's been a while since I've heard the song. Maybe it's past its peak of popularity. I hope so, since, let's face it, it's so tedious and annoying. As Christmas carols go, I wish this one a happy oblivion.

*

THE EASIEST WAY TO IMPROVE YOUR MEMORY

~ When trying to memorize new material, it’s easy to assume that the more work you put in, the better you will perform. Yet taking the occasional down time – to do literally nothing – may be exactly what you need. Just dim the lights, sit back, and enjoy 10-15 minutes of quiet contemplation, and you’ll find that your memory of the facts you have just learnt is far better than if you had attempted to use that moment more productively.

Although it’s already well known that we should pace our studies, new research suggests that we should aim for “minimal interference” during these breaks – deliberately avoiding any activity that could tamper with the delicate task of memory formation. So no running errands, checking your emails, or surfing the web on your smartphone. You really need to give your brain the chance for a complete recharge with no distractions.

An excuse to do nothing may seem like a perfect mnemonic technique for the lazy student, but this discovery may also offer some relief for people with amnesia and some forms of dementia, suggesting new ways to release a latent, previously unrecognized, capacity to learn and remember.

The remarkable memory-boosting benefits of undisturbed rest were first documented in 1900 by the German psychologist Georg Elias Muller and his student Alfons Pilzecker. In one of their many experiments on memory consolidation, Muller and Pilzecker first asked their participants to learn a list of meaningless syllables. Following a short study period, half the group were immediately given a second list to learn – while the rest were given a six-minute break before continuing.

When tested one-and-a-half-hours later, the two groups showed strikingly different patterns of recall. The participants given the break remembered nearly 50% of their list, compared to an average of 28% for the group who had been given no time to recharge their mental batteries. The finding suggested that our memory for new information is especially fragile just after it has first been encoded, making it more susceptible to interference from new information.

Although a handful of other psychologists occasionally returned to the finding, it was only in the early 2000s that the broader implications of it started to become known, with a pioneering study by Sergio Della Sala at the University of Edinburgh and Nelson Cowan at the University of Missouri.

The team was interested in discovering whether reduced interference might improve the memories of people who had suffered a neurological injury, such as a stroke. Using a similar set-up to Muller and Pilzecker’s original study, they presented their participants with lists of 15 words and tested them 10 minutes later. In some trials, the participants remained busy with some standard cognitive tests; in others, they were asked to lie in a darkened room and avoid falling asleep.

The impact of the small intervention was more profound than anyone might have believed. Although the two most severely amnesic patients showed no benefit, the others tripled the number of words they could remember – from 14% to 49%, placing them almost within the range of healthy people with no neurological damage.

The next results were even more impressive. The participants were asked to listen to some stories and answer questions an hour later. Without the chance to rest, they could recall just 7% of the facts in the story; with the rest, this jumped to 79% – an astronomical 11-fold increase in the information they retained.

The researchers also found a similar, though less pronounced, benefit for healthy participants in each case, boosting recall between 10 and 30%.

Della Sala and Cowan’s former student, Michaela Dewar at Heriot-Watt University, has now led several follow-up studies, replicating the finding in many different contexts. In healthy participants, they have found that these short periods of rest can also improve our spatial memories, for instance – helping participants to recall the location of different landmarks in a virtual reality environment.

Crucially, this advantage lingers a week after the original learning task, and it seems to benefit young and old people alike. And besides the stroke survivors, they have also found similar benefits for people in the earlier, milder stages of Alzheimer’s disease.

In each case, the researchers simply asked the participants to sit in a dim, quiet room, without their mobile phones or similar distractions. “We don’t give them any specific instructions with regards to what they should or shouldn’t do while resting,” Dewar says. “But questionnaires completed at the end of our experiments suggest that most people simply let their minds wander.”

Even then, we should be careful not to exert ourselves too hard as we daydream. In one study, for instance, participants were asked to imagine a past or future event during their break, which appeared to reduce their later recall of the newly learnt material. So it may be safest to avoid any concerted mental effort during our down time.

The exact mechanism is still unknown, though some clues come from a growing understanding of memory formation. It is now well accepted that once memories are initially encoded, they pass through a period of consolidation that cements them in long-term storage. This was once thought to happen primarily during sleep, with heightened communication between the hippocampus – where memories are first formed – and the cortex, a process that may build and strengthen the new neural connections that are necessary for later recall.

This heightened nocturnal activity may be the reason that we often learn things better just before bed. But in line with Dewar’s work, a 2010 study by Lila Davachi at New York University found that it was not limited to sleep, and similar neural activity occurs during periods of wakeful rest, too. In the study, participants were first asked to memorize pairs of pictures – matching a face to an object or scene – and then allowed to lie back and let their minds wander for a short period. Sure enough, she found increased communication between the hippocampus and areas of the visual cortex during their rest. Crucially, people who showed a greater increase in connectivity between these areas were the ones who remembered more of the task, she says.

Perhaps the brain takes any potential down time to cement what it has recently learnt – and reducing extra stimulation at this time may ease that process. It would seem that neurological damage may render the brain especially vulnerable to that interference after learning a new memory, which is why the period of rest proved to be particularly potent for stroke survivors and people with Alzheimer’s disease.

Other psychologists are excited about the research. “The effect is quite consistent across studies now in a range of experiments and memory tasks,” says Aidan Horner at the University of York. “It’s fascinating.” Horner agrees that it could potentially offer new ways to help individuals with impairments to function.

Practically speaking, he points out that it may be difficult to schedule enough periods of rest to increase their overall daily recall. But he thinks it could still be valuable to help a patient learn important new information – such as learning the name and face of a new caretaker. “Perhaps a short period of wakeful rest after that would increase the chances that they would remember that person, and therefore feel more comfortable with them later on.” Dewar tells me that she is aware of one patient who seems to have benefitted from using a short rest to learn the name of their grandchild, though she emphasizes that it is only anecdotal evidence.

Thomas Baguley at Nottingham Trent University in the UK is also cautiously optimistic. He points out that some Alzheimer’s patients are already advised to engage in mindfulness techniques to alleviate stress and improve overall well-being. “Some [of these] interventions may also promote wakeful rest and it is worth exploring whether they work in part because of reducing interference,” he says, though it may be difficult to implement in people with severe dementia.

Beyond the clinical benefits for these patients, Baguley and Horner both agree that scheduling regular periods of rest, without distraction, could help us all hold onto new material a little more firmly. After all, for many students, the 10-30% improvements recorded in these studies could mark the difference between a grade or two. “I can imagine you could embed these 10-15 minute breaks within a revision period,” says Horner, “and that might be a useful way of making small improvements to your ability to remember later on.”

In the age of information overload, it’s worth remembering that our smartphones aren’t the only thing that needs a regular recharge. Our minds clearly do too.

https://www.bbc.com/future/article/20180208-an-effortless-way-to-strengthen-your-memory

Oriana:

This is excellent information. Now if it only became easier for those of us who love to work hard (all right, go ahead and call us workaholics) to take do-nothing breaks.

*

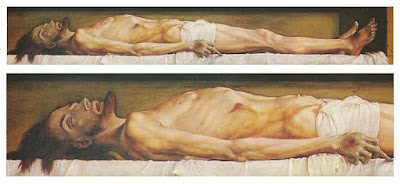

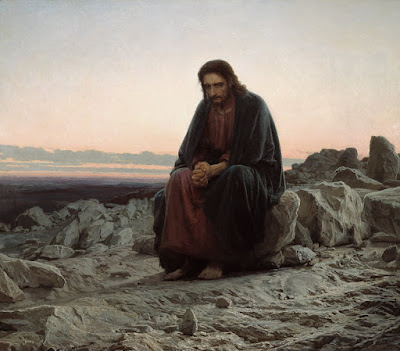

DOSTOYEVSKY’S PROBLEM WITH HOLBEIN’S REALISTIC PAINTING OF DEAD CHRIST: HUMAN, ALL TOO HUMAN?

~ Much of The Idiot was written while Dostoevsky and his wife were living in Florence, just a stone’s throw away from the Pitti Palace, where the writer often went to see and to admire the paintings that adorned its walls, singling out Raphael’s Madonna della Seggiola (Madonna of the Chair) for special mention. It is very probably no coincidence that visual images play a prominent role in The Idiot.

Early on in the narrative, Prince Myshkin, the eponymous ‘idiot’, sees a photograph of the beautiful Anastasia Phillipovna that makes an extraordinary impression on him and generates a fascination that will end with her death and his madness. But insofar as Anastasia Phillipovna is the epitome of human beauty in the world of the novel, this photograph can also serve as a visual aid to the saying attributed to the Prince, that ‘beauty will save the world’.

Later, he is confronted with an image of a very different kind — Hans Holbein’s 1520-22 painting of the dead Christ, shown with unflinching realism and reportedly using the body of a suicide as model. It is a Christ stripped of the beauty that bourgeois taste regarded as an essential attribute of his humanity and, in its unambiguous mortality, devoid also of divinity.

On first seeing it, Myshkin comments that a man could lose his faith looking at such a picture and, later, the despairing young nihilist, Hippolit, declares that just this picture reveals Christ’s powerlessness in face of the impersonal forces of nature and the necessity of death that awaits every living being. It is, Hippolit suggests, an image that renders faith in resurrection impossible.

These two images can be seen as establishing the visual parameters for a complex interplay of the themes of beauty, death, and divinity that run through the novel as a whole and that go a long way to structuring the conceptual — and religious — drama at its heart. This drama is also, crucially, at the center of then-contemporary European debate about Christ and about the representation of Christ. But these are not the only images that contribute to Dostoevsky’s take on that debate. It has been suggested that the opening description of Myshkin is modeled on the canonical icon of Christ in Orthodox tradition and much of the novel’s theological force has to do with the eclipse of Myshkin’s icon-like identity in the encounter with a modern Russia in the grip of a capitalist revolution.

On his arrival in St Petersburg from the West, Myshkin goes to call on his distant relative, Mme Epanchina (it is in her husband’s office that he sees the photograph of Anastasia Phillipovna). Mme Epanchina’s oldest daughter Adelaida is a keen amateur landscape painter and over breakfast Myshkin rather inappropriately suggests that the face of a man in the moment of being guillotined might make a suitable subject for her painting. And, finally, there is an imaginary painting of Christ that Anastasia Phillipovna ‘paints’ in one of her letters to Aglaia Epanchina to whom, by this point, Myshkin has become engaged. It is this ‘painting’ that is the main focus of this paper, in part because it has been under-discussed in secondary literature in comparison with Anastasia’s photograph and Holbein’s dead Christ but also because it makes an important contribution to the debate about Christ and about how to represent Christ that, as we have seen, is central to the religious questions at issue in the novel.

This picture is, of course, painted in words and not an actual painting, but Anastasia Phillipovna describes it as if it were a painting and she clearly wants Aglaia too to see it that way. Dostoevsky thus invites readers too to imagine it as a picture they might see in a gallery. Anastasia Phillipovna depicts Christ as sitting, alone, accompanied only by a child, on whose head he ‘unconsciously’ rests his hand, while ‘looking into the distance at the horizon; thought, great as the world, dwells in His eyes. His face is sorrowful’. The child looks up at him, the sun is setting.

On first reading, the portrait might not seem very unusual. It is the kind of portrait that we have become rather used to. Christ sitting with children is a subject familiar from innumerable popular Christian books and devotional pictures. Yet in the 1860s images of Christ sitting and of Christ sitting with children were both equally innovative, having relatively few precedents in earlier iconography.

Unsurprisingly, the theme becomes much more common with the rise of romanticism and a new more positive evaluation of childhood and the idea that children had a special affinity with the divine, with notable examples from Benjamin West, William Blake, and Charles Lock Eastlake and, by the mid-Victorian era, it had become a widespread and popular topic. Unlike in earlier representations, Christ is now to be seen alone with varying numbers of children, unaccompanied by a crowd of mothers and disciples. This tendency becomes especially prominent in illustrated Bible stories specifically for children—another phenomenon of the nineteenth century.

In a sense, the reason for the new prominence of these themes is not hard to fathom. It reflects a turn to the human Jesus and a new emphasis on the role of feeling in religious life. The Christ of ecclesiastical tradition was Savior by virtue of the ontological power of the hypostatic union, uniting divine and human in the very person of his being. It is this identity as both divine-and-human that makes it possible for his innocent suffering on the cross to be salvific rather than merely tragic. In the wake of romanticism, however, his qualification as Savior has to do with his uniquely intense God-consciousness and his unrestricted empathy with other human beings, an empathy that extends even to their suffering and sin.

Ernest Renan describes Jesus’s religion as ‘a religion without priests and without external practices, resting entirely on the feelings of the heart, on the imitation of God, on the immediate rapport of [human] consciousness with the heavenly Father’. Renan’s characteristically 19th century bourgeois assumptions led him to see women as being especially susceptible to ‘the feelings of the heart’ and it was therefore no surprise that ‘women received him eagerly’. ‘[W]omen and children adored him’ and

… the nascent religion was thus in many respects a movement of women and children … He missed no occasion for repeating that children are sacred beings, that the Kingdom of God belongs to children, that we must become as children in order to enter it, that we must receive it as a child, and that the Father conceals his secrets from the wise and reveals them to the little one. He almost conflates the idea of discipleship with that of being a child … It was in effect childhood, in its divine spontaneity, in its naïve bursts of joy, that would take possession of the earth.

How do these themes resonate with the action and personalities of The Idiot? Clearly, Anastasia Phillipovna’s ‘portrait’ of Christ lives in the atmosphere of Renan’s and similar humanist-sentimental lives of Jesus. But what does this mean for the novel’s possible contribution to the religious understanding of Christ as a whole?

The historical Lives of Jesus movement had its scandals, but the parallel moves in art also provoked bemusement and sometimes hostility, as in the case of other new developments in nineteenth century art. The novelty of this new view of Christ can be seen by reactions to Ivan Kramskoy’s painting of Christ in the wilderness. Tolstoy would later say of it that it was ‘the best Christ I know’, but many of the reactions to it were far more negative. For many this was a Christ devoid of divinity, a manifestation of historicist positivism in art. ‘Whoever he is,’ Ivan Goncharov continues, ‘he is without history, without any gifts to offer, without a gospel … [Christ] in his worldly, wretched aspect, on foot in a corner of the desert, amongst the bare stones of Palestine … where, it seems, even these stones are weeping!’

What kenotic Christology refers to as Christ’s state of exinanition (i.e., his human state of weakness and vulnerability, emptied of his divine attributes), is thus becoming a theme of contemporary art at the time of Dostoevsky’s composition of The Idiot, paralleling to some extent the development of the historical portrayal of the life of Jesus. Both in historiography and art the same question then arises, namely, how, if Jesus is portrayed as fully human, can his divinity be rescued from the manifestation of what is visibly all-too human?

The sitting Christ, absorbed in brooding thoughts and given over to melancholy, seems to be a Christ who is in the process of becoming all-too human. In this regard, it is noteworthy that where Renan’s Christ goes alone to look out over Jerusalem at sunrise, Anastasia Phillipovna’s Christ is pictured at sunset. Her [verbal] portrait reveals the shadow side of Renan’s optimism. For her, the light is fading, as natural light always must.

But what does this tell us about Dostoevsky’s novel? Firstly, it underlines the contemporaneity of Dostoevsky’s visual vocabulary deployed in the novel. Not only is this one of the first novels in which a photograph (the photographic portrait of Anastasia Phillipovna) plays a major role, but the image of the sitting Christ, accompanied only by a child, reflects contemporary developments in religious art that are also further connected with contemporary historiography. Holbein’s dead Christ is, of course, a picture from an earlier age. However, on the one hand, it offers a ne plus ultra of the humanizing approach to Jesus and, on the other it is a theme we also find in Manet’s Dead Christ with Angels, exhibited alongside his better-known Olympia in the same year that The Idiot was published. Like Kramskoy’s painting, this was seen by many critics as sacrilegious and an affront to faith by virtue of the elimination of all elements of beauty and conventional sacrality. Visually, as well as in literary terms, Dostoevsky is entirely in synchronization with the decisive movements of the visual culture of his time.

Manet: Dead Christ with Angels

In fact, commenting in 1873 on Nicholas Gé’s Mystic Night (which, as we have seen, also attracted Goncharov’s attention), Dostoevsky showed himself to be alert to the risks of a one-sidedly humanizing and sentimental approach to Christ in art. In a review published in The Citizen he writes:

Look attentively: this is an ordinary quarrel among most ordinary men. Here Christ is sitting, but is it really Christ? This may be a very kind young man, quite grieved by the altercation with Judas, who is standing right there and putting on his garb, ready to go and make his denunciation, but it is not the Christ we know. The Master is surrounded by His friends who hasten to comfort Him, but the question is: where are the succeeding eighteen centuries of Christianity, and what have these to do with the matter? How is it conceivable that out of the commonplace dispute of such ordinary men who had come together for supper, as this is portrayed by Mr. Gué [sic], something so colossal could have emerged?

Secondly, setting Anastasia Phillipovna’s portrait of Christ in an art-historical context may not directly solve the question as to whether Myshkin is to be regarded as some kind of Christ-figure (and, if so, what kind) but it does illuminate how Anastasia Phillipovna sees him. We know that she reads much and is given to speculative ideas, and it is therefore not at all surprising that her vision of Christ and of Myshkin as Christ is a vision taken from contemporary, humanist, Western sources, a sentimental Christ whose power to save is, at best, fragile. In this way, whether or not we are to read Dostoevsky’s portrayal of Myshkin as a Christ-figure, he is a Christ-figure, albeit a very particular kind of Christ-figure, for her. In any case, to the extent that Anastasia Phillipovna places her own hope of salvation in such a Christ-figure, it is doomed to fail.

There is one further iconographical intertext that is worth exploring in connection with Anastasia Phillipovna’s ‘portrait’, although it is not expressly mentioned. What is mentioned—Evgeny Pavlovitch mentions it—is that Myshkin’s relation to her might be seen as mirroring the gospel story of the woman taken in adultery and protected by Christ from being stoned to death (an association reinforced by the story Myshkin himself tells of the outcast Marie he had rescued from ostracism in the little Swiss village where he had lived before the start of the novel’s action).

This would imply that her deepest hope is, through Myshkin, to sit at the feet of Christ, listening to his word, taking the better part. But if this is her hope, then it is well-hidden, screened not only by the substitution of Aglaia for herself but also by the sentimental positivism of nineteenth century historicism that comes to expression in her portrait of a melancholy Christ contemplating the light of a setting sun. In this way, it may not only be her psychological injuries that make her incapable of accepting the forgiveness that Myshkin offers, it may also be her—and the age’s—misconception of Christ that gets in the way.

This, clearly, makes the issue less individual and less psychological. Arguably, it also makes it more tragic in a classical sense. This is because her fate is that of a whole world of values that, in this historical moment, is descending into the impending darkness. Yet—even if Dostoevsky himself does not say this—there might remain a chance, however slender, that this ‘human all-too human’ Christ retains a memory of another light and, with that memory, the hope that the values of this present age are not the sole values by which we and the world are to be judged.

https://www.eurozine.com/human-all-too-human/#footnote-5

Mary:

On Holbein's dead Christ and beauty, death and divinity...Don't we always make our gods beautiful?? The nature of Christ as savior is that he is both divine and human, at once and always. To depict him as the empty, fully human corpse, is to deny him as god, and without divinity he cannot function as savior. The same problem is there with the romanticized Christ figure, again, fully, or should I say, only, human.

And even the best, most saintly human, cannot be Savior, because he is not also and always at the same time divine.

I think I'm getting a theological headache!

Oriana:

Can somebody who's "only" human be a savior? It depends on the definition of a savior. Jonas Salk saved us from living in terror of polio. In my class there was a girl who'd been crippled by polio. True, it's a partial, physical salvation, but imagine . . .

Dostoyevsky stated that if he had to choose between truth (roughly synonymous with science) and Christ, he’d choose Christ. However, I suspect that once we are fully convinced that something is true, we can’t reject it because it’s more emotionally comforting to believe that if we go to church every Sunday then we’ll go to heaven’s eternal bliss. As one nun in the movie “The Innocents” says, “Faith is one minute of belief and twenty-four hours of doubt.” And as one literature professor said, “Dostoyevsky believed in God on Monday, but by Wednesday again he didn’t believe.” Such doubt-filled faith can no longer be a source of comfort.

But as for the beauty of religious art, that’s the one truly redeeming factor. Gothic cathedrals may be the monuments to intellectual error, but at least we can truly enjoy the rose windows, the riot of arches — whereas Holbein’s Dead Christ is hideous (though I admire Holbein’s courage in reaching for such realism). As the history of humanity goes, it’s just one of many ironies and oxymorons.

*

Gabriele Kuiznate. I chose this image simply because beauty is its own excuse. And let us hope it will indeed save the world.

*

“I understood at a very early age that in nature I felt everything I should feel in church but never did. Walking in the woods, I felt in touch with the universe and with the spirit of the universe.” ~ Alice Walker

*

HOW ANESTHESIA CHANGED THE NATURE OF CHILDBIRTH

~ On December 27, 1845, a physician named Crawford W. Long gave his wife ether as an anesthetic during childbirth. This is the earliest use of ether in childbirth on record–but Long, who didn’t publish his results until the 1850s, spent his lifetime fighting to be recognized. Whatever it may have meant for his career, this event marked the beginning of a new era in childbirth–one where the possibility of pain relief was available.

When Long did this, he had already used ether on a friend, writes anesthesiologist Almiro dos Reis Júnior, to remove infected cysts from his neck. Long had experience with the substance from so-called “ether parties” where young people would knock each other out for fun. However, the public was skeptical of knocking people unconscious during surgery, so Long stopped using ether in his clinic. “But Long still believed in the importance of anesthesia and administered ether to his wife during the birth of his second child in 1845 and other subsequent deliveries, thus undoubtedly becoming the pioneer of obstetric analgesia,” writes dos Reis Júnior.

Later in his life, Long tried to get credit for pioneering surgical anesthesia, a contentious claim that historians didn't recognize until recently. But he didn’t seek credit for obstetric anesthesia, writes historian Roger K. Thomas, even though “his use of ether with his wife predates by slightly more than a year that of the Scottish physician, James Y. Simpson, who is credited with the first obstetrical use of anesthesia.”

Simpson studied and taught at the University of Edinburgh, the first university in the world to have such a focus on gynecology and obstetrics, writes P.M. Dunn in the British Medical Journal. On January 19, 1847, he used ether in a difficult delivery. “He immediately became an enthusiastic supporter and publicist of its use, vigorously countering the arguments of those who suggested God had ordained that women should suffer during childbirth,” Dunn writes.

After some experimentation, Simpson concluded that chloroform was better than ether for use in childbirth. The first time he used chloroform to assist in a birth, the grateful parents christened their daughter Anesthesia.

The idea of anesthesia in childbirth caught on pretty quickly after this. In 1847, Fanny Longfellow, who was married to one of America’s most prominent poets, used ether during her delivery. Then in 1853, writes author William Camann, “Queen Victoria [used chloroform] to relieve labor pain during the birth of Prince Leopold, ending any moral opposition to pain relief during childbirth.”

The idea of pain relief during surgery was unprecedented when surgeons started experimenting with it in the 1840s. For women, who routinely underwent agony to bear a child, the idea of birth without pain represented a new freedom. Following these innovations, writes Dunn, “women lobbied to assure pain relief during labor and sought greater control over delivery.” ~

https://www.smithsonianmag.com/smart-news/it-didnt-take-very-long-anesthesia-change-childbirth-180967636/

*

MOVING TOWARD A UNIVERSAL FLU VACCINE

~ Scientists at Scripps Research, University of Chicago and Icahn School of Medicine at Mount Sinai have identified a new Achilles' heel of influenza virus, making progress in the quest for a universal flu vaccine. Antibodies against a long-ignored section of the virus, which the team dubbed the anchor, have the potential to recognize a broad variety of flu strains, even as the virus mutates from year to year, they reported Dec. 23, 2021 in the journal Nature.

"By identifying sites of vulnerability to antibodies that are shared by large numbers of variant influenza strains we can design vaccines that are less affected by viral mutations," says study co-senior author Patrick Wilson, MD. "The anchor antibodies we describe bind to such a site. The antibodies themselves can also be developed as drugs with broad therapeutic applications."

Ideally, a universal influenza vaccine will lead to antibodies against multiple sections of the virus -- such as both the HA anchor and the stalk -- to increase protection against evolving viruses. ~

https://www.sciencedaily.com/releases/2021/12/211223113049.htm

*

ending on beauty:

The night ocean

shatters its black mirrors.

Waves shed their starry skins,

the foam hisses yess, yess.

What do astronomers know?

a handful of moons,

a Milky Way of facts:

every atom inside us

was once inside a star.

Star maps are within us,

private constellations

of House, Tree, Cat,

waiting to become

once again an alphabet of light —

on the blackboard of night sky

spelling out

This is your face

beyond your face,

the galaxies that spiral

through the lines of your palm

~ Oriana, Star Maps